NVIDIA GTC 2026: An AI Architect's Breakdown of Vera Rubin, Groq LPUs, and the Agentic Infrastructure Shift

Intelligence Dispatches · March 17, 2026 · 12 min read

Seven chips, five racks, one $1 trillion bet. A technical breakdown of NVIDIA GTC 2026 — Vera Rubin, Groq 3 LPUs, Dynamo, NemoClaw — and what enterprise AI architects should do next.

Reading Goal: Understand the architectural significance of every major GTC 2026 announcement and what to do about it.

TL;DR: NVIDIA GTC 2026 unveiled Vera Rubin: seven chips, five rack types, 3.6 exaflops, 700 million tokens per second. Groq LPUs, the first product of NVIDIA's Groq acquisition, take over decode while Rubin GPUs keep prefill, for a 35x tokens-per-watt improvement. Dynamo 1.0 is the inference OS. NemoClaw provides the agentic execution layer. The message is clear: inference is the new bottleneck, and NVIDIA is building the full stack to own it. Here's what matters if you're building production AI systems.

Why GTC 2026 Is Different

GTC 2025 was about training. GTC 2026 is about inference and agentic AI.

That distinction matters. Training is a batch process — you do it once, then deploy. Inference runs continuously in production. Agents make it worse: every reasoning step, every tool call, every multi-turn loop multiplies inference demand. Jensen Huang walked onto the SAP Center stage on March 16 and laid out NVIDIA's answer to this: a vertically integrated platform spanning chips, racks, networking, and software — all designed for a world where AI doesn't just answer questions but takes action.

"The agentic AI inflection point has arrived." — Jensen Huang

The scale of the ambition: $1 trillion in projected purchase orders through 2027 between Blackwell and Vera Rubin. Last year, that number was $500 billion. NVIDIA doubled it in twelve months.

Vera Rubin: The Seven-Chip Platform

The centerpiece: Vera Rubin, a full-stack computing platform comprising seven chips, five rack-scale systems, and one AI supercomputer. This isn't a GPU announcement — it's an entire computing architecture designed from the ground up for agentic inference.

| Component | Specification | Architect Implication |
|---|---|---|
| 72 Rubin GPUs | HBM4, 22 TB/s memory bandwidth | Long-context agent reasoning without memory bottleneck |
| 36 Vera CPUs | Agentic data movement | CPU-side agent orchestration; less GPU waste on control flow |
| ConnectX-9 SuperNICs | Scale-out networking | Multi-rack agent deployments with acceptable latency |
| BlueField-4 DPUs | Network-level processing | Security and observability offloaded from compute path |
| NVLink 6 | 260 TB/s aggregate bandwidth | In-rack model parallelism at scale impossible two years ago |

The Numbers That Matter

Three metrics with real architectural meaning:

3.6 exaflops — This exceeds the combined power of the top 200 supercomputers from 2020. Put in context: real-time multi-agent simulation at enterprise scale becomes feasible. Not just bigger models — more agents running simultaneously.

700 million tokens per second vs 2 million for x86+Hopper — A 350x throughput improvement changes the economics of agent-based architectures. Multi-turn reasoning loops that were cost-prohibitive become viable. The difference between "we can afford 3 agent hops" and "we can afford 300" is the difference between a chatbot and an autonomous system.

10x performance per watt vs Grace Blackwell — Data center power constraints are real. Enterprises that hit power ceilings with Blackwell can now do 10x more within the same power envelope. Sustainability stops being a talking point and becomes a competitive advantage.
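As a quick check on the throughput figures quoted above, the following minimal sketch recomputes the 350x ratio and shows how aggregate throughput translates into agent hops per second. The `tokens_per_hop` value is a hypothetical assumption chosen for illustration, not a figure from the keynote.

```python
# Throughput figures quoted in the article (tokens per second).
RUBIN_TPS = 700_000_000
HOPPER_TPS = 2_000_000


def speedup(new_tps: float, old_tps: float) -> float:
    """Ratio of the new throughput to the old throughput."""
    return new_tps / old_tps


def hops_within_budget(tps: float, tokens_per_hop: int, seconds: float) -> int:
    """Number of agent hops that fit in a time window at a given aggregate throughput.

    tokens_per_hop is an illustrative assumption: the tokens one hop consumes.
    """
    return int(tps * seconds // tokens_per_hop)


ratio = speedup(RUBIN_TPS, HOPPER_TPS)
hopper_hops = hops_within_budget(HOPPER_TPS, tokens_per_hop=2_000, seconds=1.0)
rubin_hops = hops_within_budget(RUBIN_TPS, tokens_per_hop=2_000, seconds=1.0)
```

The hop counts scale by the same ratio as the throughput, which is the point of the "3 hops versus 300" comparison: the budget per task grows in proportion to the platform throughput, all else being equal.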

The Vera Rubin NVL72 weighs roughly 4,000 lbs and houses 1.3 million individual components across 18 compute trays and 9 NVLink switch trays. It ships in the second half of 2026.

The Groq Acquisition Changes Inference Economics

This is the most architecturally significant announcement for practitioners.

NVIDIA unveiled Groq 3 — the first chip from its $20 billion Groq acquisition — and integrated it directly into the Vera Rubin rack architecture. The Groq 3 LPX rack holds 256 LPUs and sits beside the Vera Rubin rack-scale system.

Splitting Prefill and Decode

The key insight: prefill and decode are fundamentally different workloads, and running both on the same hardware is wasteful.

- Prefill (processing the prompt, building the KV cache) is compute-bound. It benefits from massive parallelism. Stays on Rubin GPUs.

- Decode (generating tokens one at a time) is memory-bandwidth-bound. It benefits from deterministic, high-bandwidth execution. Moves to Groq LPUs.

- Dynamo handles orchestration between the two — routing work to the right hardware automatically.

The result: 35x improvement in tokens-per-watt. Not 35% — 35 times.
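The split described above can be sketched as a toy planner that assigns each phase of a request to a hardware pool. This is not Dynamo's API: the names `Phase`, `Request`, `plan`, and the pool labels are hypothetical, and a real scheduler would also handle KV-cache transfer, batching, and load.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    PREFILL = "prefill"  # process the prompt and build the KV cache
    DECODE = "decode"    # generate tokens one at a time


# Hypothetical pool labels, following the article's GPU/LPU assignment.
POOL_FOR_PHASE = {Phase.PREFILL: "rubin-gpu", Phase.DECODE: "groq-lpu"}


@dataclass
class Request:
    request_id: str
    prompt_tokens: int
    max_new_tokens: int


def plan(request: Request) -> list[tuple[Phase, str, int]]:
    """Return the (phase, pool, token_count) steps for one request."""
    steps = [(Phase.PREFILL, POOL_FOR_PHASE[Phase.PREFILL], request.prompt_tokens)]
    # Decode is skipped when no new tokens are requested (e.g. embedding-style calls).
    if request.max_new_tokens > 0:
        steps.append(
            (Phase.DECODE, POOL_FOR_PHASE[Phase.DECODE], request.max_new_tokens)
        )
    return steps


steps = plan(Request("r1", prompt_tokens=4096, max_new_tokens=256))
```

Keeping the phase-to-pool mapping in one table is a deliberate choice in this sketch: it makes the routing policy a piece of configuration that can change without touching request handling.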

What This Means in Practice

As an illustration, take a production agent system processing 10 million agent-turns per day. Multi-turn agent loops that consume $50K/month on Blackwell-only inference could drop below $5K/month on Vera Rubin + Groq, a reduction of at least 10x. That changes which AI products are economically viable.
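To make that arithmetic explicit, here is a minimal cost sketch using the figures above. It assumes monthly cost scales inversely with a single reduction factor, which is an idealization: energy is only one component of inference cost, so the 35x tokens-per-watt figure is an upper bound on the factor rather than a prediction. The function names are illustrative.

```python
def cost_per_turn(monthly_cost_usd: float, turns_per_day: int, days: int = 30) -> float:
    """Average cost of one agent-turn, assuming a fixed-length month."""
    return monthly_cost_usd / (turns_per_day * days)


def projected_monthly_cost(monthly_cost_usd: float, cost_reduction: float) -> float:
    """Monthly cost after dividing by an assumed cost-reduction factor."""
    if cost_reduction <= 0:
        raise ValueError("cost_reduction must be positive")
    return monthly_cost_usd / cost_reduction


BASELINE_USD = 50_000.0      # Blackwell-only monthly spend from the example
TURNS_PER_DAY = 10_000_000   # agent-turns per day from the example

baseline_per_turn = cost_per_turn(BASELINE_USD, TURNS_PER_DAY)
at_10x = projected_monthly_cost(BASELINE_USD, 10)   # the "below $5K" threshold
at_35x = projected_monthly_cost(BASELINE_USD, 35)   # idealized best case
```

Any factor above 10x lands under the $5K mark; where a real deployment falls between 10x and 35x depends on pricing that has not been announced.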

Note that 256 LPUs per rack is a density play, not just a speed play. NVIDIA is targeting the physical infrastructure constraints that enterprises actually hit.

Dynamo 1.0: The Missing Inference OS

Dynamo is NVIDIA's answer to the question: who orchestrates inference across heterogeneous hardware?

Think of it this way: Dynamo aims to do for inference what Kubernetes did for containers. When you have GPUs, LPUs, DPUs, and CPUs all participating in a single inference pipeline, something needs to manage the routing, scheduling, and optimization across that heterogeneous stack.

Dynamo 1.0 entered production at GTC, delivering a 7x inference boost on current Blackwell hardware — before Vera Rubin even ships. For architects running NVIDIA Triton or TensorRT-LLM today, Dynamo is the natural next abstraction layer up.

This is what Jensen meant by "the operating system for AI factories." Not a marketing phrase — a literal runtime that manages disaggregated inference workloads across mixed hardware.

NemoClaw and OpenClaw: NVIDIA's Agentic Operating System

Jensen described NemoClaw as "an open-sourced operating system of agentic computers." Combined with OpenClaw, it handles agent tool use, planning, and execution as a managed runtime layer.

Nemotron 3 and Enterprise Adoption

The underlying model, Nemotron 3, now ranks in the top 3 globally and has been adopted by CrowdStrike, Cursor, Perplexity, and ServiceNow. Those aren't experimental deployments — they're production integrations at companies processing millions of requests daily.

How It Layers With Your Agent Stack

If you're using LangGraph, CrewAI, or AutoGen today, NemoClaw is not a replacement. It's a lower-level runtime layer. Think of it as: your orchestration framework handles workflow logic on top, NemoClaw handles tool dispatch and execution underneath, optimized for NVIDIA hardware by default.
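The layering can be sketched with a small interface boundary. Everything here is hypothetical: `ToolRuntime`, `LocalRuntime`, and `run_workflow` are illustrative names, not NemoClaw, LangGraph, or CrewAI APIs. The point is only that workflow logic depends on a narrow dispatch interface, so the runtime underneath can be swapped.

```python
from typing import Any, Callable, Protocol


class ToolRuntime(Protocol):
    """Hypothetical lower-layer interface: executes one named tool call."""

    def dispatch(self, tool: str, args: dict[str, Any]) -> Any: ...


class LocalRuntime:
    """Stand-in runtime that runs plain Python callables in-process."""

    def __init__(self, tools: dict[str, Callable[..., Any]]) -> None:
        self._tools = tools

    def dispatch(self, tool: str, args: dict[str, Any]) -> Any:
        return self._tools[tool](**args)


def run_workflow(
    runtime: ToolRuntime, steps: list[tuple[str, dict[str, Any]]]
) -> list[Any]:
    """Upper layer: the workflow decides the order, the runtime executes each call."""
    return [runtime.dispatch(tool, args) for tool, args in steps]


runtime = LocalRuntime({"add": lambda a, b: a + b, "upper": lambda s: s.upper()})
results = run_workflow(runtime, [("add", {"a": 2, "b": 3}), ("upper", {"s": "ok"})])
```

Under this assumption, moving to a hardware-optimized runtime would mean providing another class with the same `dispatch` method, leaving the workflow code unchanged.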

The strategic play is subtle but important: if your agents run on NemoClaw, they're automatically optimized for NVIDIA's inference stack. The lock-in is real, but so is the performance advantage.

DGX Spark and DGX Station for local agent development signal that NVIDIA wants to own the development loop, not just production deployment. Build locally, deploy to Vera Rubin at scale.

Jensen's proclamation: "Every SaaS company will become AaaS" — Agent-as-a-Service. Whether that's aspirational or inevitable depends on your industry, but the infrastructure to make it possible is shipping this year.

For deeper context on how agent orchestration frameworks compose, see my breakdown of production agentic AI systems and multi-agent orchestration patterns.

Physical AI Comes to Life

If CES 2026 was the thesis, GTC 2026 is the proof of execution.

110 robots were demonstrated on the GTC show floor. Cosmos 3, the next generation of NVIDIA's world foundation model, now provides the simulation backbone for training robots in synthetic environments. Disney showed an Olaf robot running NVIDIA's Newton physics engine — the kind of demo that looks playful but demonstrates real-time physical reasoning.

On the autonomous vehicle front, BYD, Hyundai, Nissan, Geely, and Isuzu are building Level 4 autonomous vehicles on NVIDIA's Drive Hyperion platform. Uber announced a partnership integration. Jensen declared the "ChatGPT moment of self-driving cars has arrived," meaning the cost curve and model capability have crossed the deployment threshold simultaneously.

In healthcare, Roche is deploying 3,500+ Blackwell GPUs for drug discovery. Physical AI isn't just robots and cars — it's molecular simulation, protein folding, and computational biology at scale.

What Enterprise AI Architects Should Do Next

Five concrete actions based on what was announced:

1. Audit your inference architecture. If you're running monolithic GPU inference, the prefill/decode split is coming. Start isolating these stages in your pipeline now so the migration to Dynamo is clean.

2. Evaluate Dynamo compatibility. If you're on Triton or TensorRT-LLM, Dynamo is the natural evolution. If you're on a non-NVIDIA inference stack, assess the lock-in risk versus the performance gap.

3. Revisit agent cost models. 700 million tokens per second at 10x better performance-per-watt changes the math on multi-turn agent loops. Recalculate your cost-per-agent-task projections with Vera Rubin pricing.

4. Watch the NemoClaw/framework integration. CrowdStrike and ServiceNow adoption signals enterprise readiness. Monitor how NemoClaw composes with LangGraph and CrewAI before committing to a framework.

5. Start procurement conversations. Vera Rubin ships H2 2026. Azure is first. AWS has committed over a million GPUs plus Groq LPUs. Cloud availability will be the bottleneck, not hardware readiness.
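For the first action, one low-cost starting point is to measure the two stages separately from the client side. The sketch below treats time-to-first-token as a proxy for prefill and mean inter-token time as a proxy for decode. It assumes your serving stack exposes a token stream as a Python iterable; `stage_timings` and the injectable `clock` are illustrative names, not part of any NVIDIA tool.

```python
import time
from typing import Callable, Iterable


def stage_timings(
    stream: Iterable[str], clock: Callable[[], float] = time.perf_counter
) -> dict[str, float]:
    """Split one streamed response into prefill-like and decode-like timings."""
    start = clock()
    stamps = []
    for _ in stream:
        stamps.append(clock())  # timestamp the arrival of each token
    if not stamps:
        raise ValueError("stream produced no tokens")
    # Gaps between consecutive tokens approximate per-token decode latency.
    gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
    return {
        "ttft_s": stamps[0] - start,  # time to first token
        "mean_inter_token_s": sum(gaps) / len(gaps) if gaps else 0.0,
        "tokens": len(stamps),
    }


# Deterministic demo: a fake clock stands in for real wall time.
fake_ticks = iter([0.0, 0.5, 0.6, 0.7])
demo = stage_timings(["a", "b", "c"], clock=lambda: next(fake_ticks))
```

If time-to-first-token dominates, the workload is prefill-heavy; if inter-token time dominates across long outputs, it is decode-heavy. Client-side numbers include network and queueing delay, so they are a first approximation rather than a substitute for server-side profiling.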

For a structured approach to these decisions, see What is Agentic AI? and the Agentic AI Roadmap 2026.

The Bigger Picture: Jensen's Three-Year Roadmap

Beyond Vera Rubin, Jensen laid out a clear technology roadmap that gives architects planning visibility through 2028:

Kyber Rack Architecture (2027): 144 GPUs oriented vertically instead of horizontally, scaling to an NVL1152 supercomputer using direct optical interconnects. Ships with Vera Rubin Ultra as NVL144, giving customers three options: NVL72, NVL144, and the flagship NVL576.

Feynman Architecture (2028): A new GPU, new LPU (LP40), new CPU (Rosa, named for Rosalind Franklin), and BlueField-5 DPU. Built on TSMC's 1.6nm-class A16 process. The first NVIDIA architecture rumored to replace copper interconnects with silicon photonics, using light to transmit data between chips.

Vera Rubin Space-1: Yes, NVIDIA is putting data centers in space. Early-stage, but it signals the scale of thinking.

One telling detail from the keynote: "100% of NVIDIA is using Claude Code." When the company building the world's AI infrastructure chooses an AI coding assistant for internal development, it validates the agentic workflow thesis they're building hardware for.

The cadence is clear: annual platform leaps, each compounding on the previous. The 10-year perspective Jensen offered — 40 million times more compute in a decade — isn't a projection. It's a roadmap with named chips attached to each year.

Frequently Asked Questions

What is the NVIDIA Vera Rubin platform?

Vera Rubin is NVIDIA's full-stack computing platform with 72 Rubin GPUs, 36 Vera CPUs, ConnectX-9 SuperNICs, and BlueField-4 DPUs delivering 3.6 exaflops of compute. It's designed specifically for agentic AI workloads and ships in the second half of 2026.

How does NVIDIA's Groq acquisition change AI inference?

The 256 Groq LPUs per rack handle token decode at 35x better tokens-per-watt, while Rubin GPUs handle prefill. Dynamo orchestrates the split. This fundamentally changes inference economics for agent-heavy workloads by matching each phase to purpose-built hardware.

What is NVIDIA Dynamo?

Dynamo 1.0 is NVIDIA's inference operating system for managing heterogeneous compute across GPUs, LPUs, and DPUs. It delivers a 7x inference boost on current Blackwell hardware and serves as the orchestration layer for disaggregated inference on Vera Rubin.

What are NemoClaw and OpenClaw?

NemoClaw is NVIDIA's runtime for agentic AI — handling agent tool use, planning, and execution. OpenClaw is the open-source variant. The underlying model, Nemotron 3, is already in production at CrowdStrike, Cursor, Perplexity, and ServiceNow.

When can I deploy Vera Rubin in the cloud?

Azure will be the first hyperscale cloud provider to deploy Vera Rubin NVL72 systems, with global rollout over the coming months after the H2 2026 ship date. AWS has committed over 1 million GPUs plus Groq LPUs.

What is the Feynman architecture?

Feynman is NVIDIA's 2028 architecture: a new GPU, LP40 LPU, Rosa CPU, and BlueField-5 DPU built on TSMC's 1.6nm-class A16 process. It's expected to introduce silicon photonics, replacing copper interconnects with light-based data transmission.

How does GTC 2026 compare to CES 2026?

CES 2026 laid the Physical AI thesis with Cosmos models and autonomous vehicle partnerships. GTC 2026 delivered the production infrastructure to execute it: Vera Rubin hardware, Dynamo inference OS, NemoClaw agentic framework, and the Groq LPU integration that changes inference economics.

Sources: NVIDIA GTC 2026 Blog, CNBC, Tom's Hardware, StorageReview, NVIDIA Vera Rubin POD Technical Blog, FinancialContent

Get Started

Build your first AI system

Step-by-step guide to setting up ACOS, creating your first agent, and shipping real products with AI.

Start buildingTemplates & Blueprints

Production-ready architecture

Download AI architecture templates, multi-agent blueprints, and prompt engineering patterns.

Browse templatesInner Circle

Join the builder community

Connect with creators and architects shipping AI products. Weekly office hours, shared resources, direct access.

Join the circleRead on FrankX.AI — AI Architecture, Music & Creator Intelligence

Stay in the intelligence loop

Weekly field notes on AI systems, production patterns, and builder strategy.

Continue Reading

AI Architecture14 min

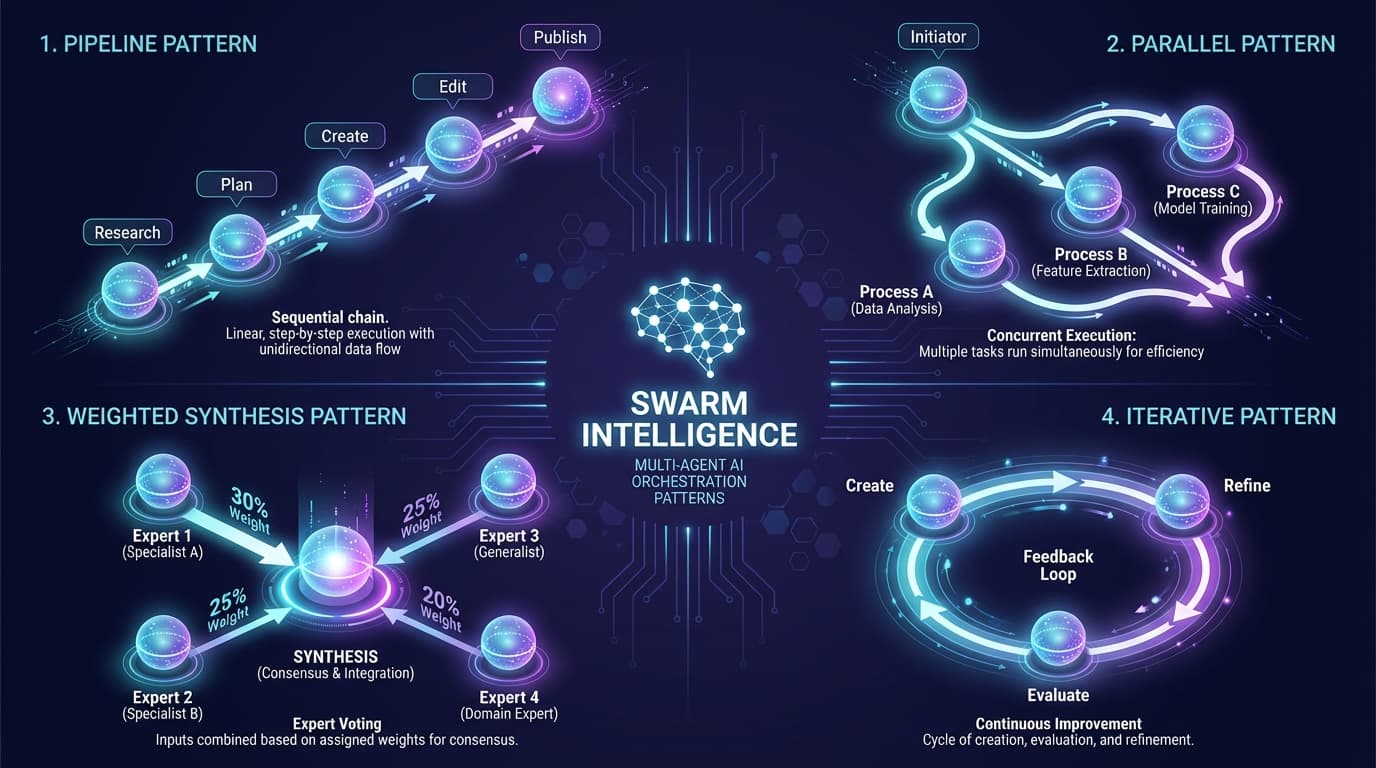

Swarm Intelligence: Multi-Agent Orchestration for Creators

Learn the 4 orchestration patterns that make AI agents work together: Pipeline, Parallel, Weighted Synthesis, and Iterative. Real examples from ACOS production use.

Read article

Intelligence Dispatches7 min read

Nvidia CES 2026: Jensen Huang Declares the 'ChatGPT Moment for Physical AI'

Everything announced at Nvidia's CES 2026 keynote - Rubin platform, Cosmos models, autonomous vehicles, and why physical AI is the next frontier for creators and enterprises.

Read article

AI Architecture10 min

No Bad Parts: What Richard Schwartz Teaches Us About Building Sovereign AI

Most AI agents are built around one voice, one objective, one persona. Human intelligence is not single-agent — and the next generation of agentic systems will not be either. The architectural lesson IFS gives AI.

Read article