Voice AI Agents in 2026: Build Audio-First Experiences

Intelligence Dispatches | March 15, 2026 | 14 min read

ElevenLabs, Hume, and the tools turning text into natural speech — for podcasts, products, and AI assistants that talk.

Reading Goal

You will understand the voice AI tools available in 2026, how to build voice-first experiences, and where audio fits in the creator stack.

TL;DR: Voice AI crossed the uncanny valley in 2026. ElevenLabs leads with the most natural text-to-speech and voice cloning at production quality. Hume AI adds emotional intelligence: voices that respond to context with appropriate tone. OpenAI TTS is the cheapest per-character option. For creators, voice AI unlocks podcast production without recording, product narration at scale, AI assistants that talk, and audio versions of written content. With OpenAI TTS, API costs are now under $5 for a full audiobook chapter; ElevenLabs runs closer to $24 at Pro-tier rates.

Audio Became a First-Class Format

I produced my first AI-voiced audio piece in early 2024. It was obviously synthetic — flat intonation, mechanical rhythm, the kind of voice that makes you immediately aware you are listening to a machine.

I ran the same script through ElevenLabs Flash v3 last month. Three colleagues asked which recording studio I used.

That gap — from "obviously synthetic" to "which studio" — is the story of voice AI in 2026. The technology crossed a threshold that changes the economics of audio production entirely.

For creators, the implications are concrete. Written content can become audio content without recording sessions. Podcast episodes can be produced without microphones. Products can speak to users. AI agents can hold conversations that do not feel like navigating an IVR system from 2009.

This is why the GenCreator framework now treats audio as a primary content format, not a secondary adaptation of written work. Let me walk through the tools that make this practical.

ElevenLabs: The Production Standard

ElevenLabs is the benchmark everything else gets measured against. If you are serious about voice AI output quality, you start here.

Text-to-Speech

The flagship product is the TTS API. Feed it text, specify a voice, and receive audio that sounds like a professional narrator. The Flash v3 model balances quality and speed: generation runs at roughly 50x realtime, so a 10-minute audio piece (600 seconds) generates in about 12 seconds.

The voice library has over 3,000 options across languages, accents, age ranges, and vocal characters. But the real capability is in the voice parameters: stability, similarity boost, style exaggeration, and speaker boost. These four settings let you dial in the exact presentation — whether you need a measured, authoritative tone for documentary narration or an expressive, dynamic voice for storytelling.

Prompt engineering for voice: This is where most people underuse ElevenLabs. The output quality correlates directly with how much structural information you embed in the text. Punctuation matters — em dashes create natural pauses, ellipses signal trailing thought, sentence length controls pace. SSML tags give you even more control: <break time="0.5s"/> for deliberate pauses, <prosody rate="slow"> for emphasis sections.
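
As a minimal sketch of how the four voice settings and a pause tag might travel with a request, the helper below builds a JSON body with `text`, `model_id`, and `voice_settings` fields. The placeholder model ID, the default values, and the helper names are illustrative assumptions, not values from ElevenLabs documentation; check the current API reference before relying on any of them. The sketch only builds the payload and does not send it.

```python
import json


def clamp01(value: float) -> float:
    """Keep a setting inside the 0.0-1.0 range."""
    return max(0.0, min(1.0, value))


def join_with_pauses(paragraphs: list[str], pause_seconds: float = 0.5) -> str:
    """Join non-empty paragraphs with an SSML break tag between them."""
    tag = f' <break time="{pause_seconds}s"/> '
    return tag.join(p.strip() for p in paragraphs if p.strip())


def build_tts_payload(
    text: str,
    model_id: str = "your-model-id",  # hypothetical placeholder
    stability: float = 0.5,           # assumed default
    similarity_boost: float = 0.75,   # assumed default
    style: float = 0.0,               # style exaggeration, assumed default
    speaker_boost: bool = True,
) -> dict:
    """Build a request body carrying the four voice settings."""
    return {
        "text": text,
        "model_id": model_id,
        "voice_settings": {
            "stability": clamp01(stability),
            "similarity_boost": clamp01(similarity_boost),
            "style": clamp01(style),
            "use_speaker_boost": speaker_boost,
        },
    }


if __name__ == "__main__":
    script = join_with_pauses(["Welcome back.", "Today we cover voice agents."])
    print(json.dumps(build_tts_payload(script, stability=0.7), indent=2))
```

Higher stability with low style suits measured documentary narration; lower stability with more style suits expressive storytelling. Treat the numbers as starting points to tune by ear.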

Voice Cloning

The professional-grade feature. ElevenLabs Professional Voice Clone takes 30 minutes of clean audio and produces a voice model that passes for the original with most listeners. The Instant Voice Clone works with just 1 minute of audio — lower fidelity, but sufficient for internal tooling or draft production.

Practical application: clone your own voice once, then generate audio at scale without scheduling recording sessions. For a creator publishing three audio pieces per week, this eliminates 3-5 hours of studio time monthly.

Dubbing

ElevenLabs Dubbing translates audio content into other languages while preserving the original speaker's voice characteristics. A YouTube video in English becomes a Spanish version in the narrator's voice, not a generic translated voice. For creators building international audiences, this is the most underutilized feature in the product.

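Pricing: Free tier (10,000 characters/month). Creator $22/month (100,000 characters). Pro $99/month (500,000 characters). API access from Creator tier. Dividing plan price by included characters gives about $0.22 per 1,000 characters on Creator and about $0.20 on Pro when the allotment is fully used. The comparisons later in this article budget a higher $0.30-0.50 per 1,000 characters for Pro-tier API usage.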
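
To make the plan arithmetic concrete, here is a small sketch that derives an effective per-1,000-character rate from a plan's price and allotment, and counts how many pieces fit in a month. The function names are illustrative, and the 12,000-character article length is the 2,000-word blog post used in the pricing scenarios later in this article. It assumes the full allotment is used and ignores overage billing.

```python
def effective_rate_per_1k(monthly_price: float, included_chars: int) -> float:
    """Plan price spread over the included characters, per 1,000 characters."""
    return monthly_price / included_chars * 1000


def pieces_per_month(included_chars: int, chars_per_piece: int) -> int:
    """Whole pieces that fit inside the monthly character allotment."""
    return included_chars // chars_per_piece


if __name__ == "__main__":
    plans = {"Creator": (22, 100_000), "Pro": (99, 500_000)}
    for name, (price, chars) in plans.items():
        rate = effective_rate_per_1k(price, chars)
        articles = pieces_per_month(chars, 12_000)
        print(f"{name}: ${rate:.3f} per 1K chars, {articles} articles of 12,000 chars")
```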

Hume AI: When Tone Matters

Hume takes a different approach. Where ElevenLabs optimizes for naturalness and voice quality, Hume focuses on emotional intelligence — generating speech that matches the emotional register of the content.

The EVI (Empathic Voice Interface) model reads the emotional context of what is being said and adjusts prosody, pacing, and intonation accordingly. A sentence expressing concern sounds concerned. A sentence delivering good news has the right uplift. Frustration, enthusiasm, uncertainty — the voice carries the appropriate signal.

Why This Matters for Agents

Standard TTS sounds natural but emotionally flat. For a voice assistant that is reading back a schedule, flat is fine. For a customer service agent handling a complaint, or an educational tutor responding to a student's confusion, flat is the wrong register entirely.

Hume closes this gap. When you build a voice agent with Hume's EVI — and wire it into a system prompt that defines its character and purpose — the agent communicates with appropriate emotional calibration.

I have tested this in a product demo context. The Hume-powered assistant responding to "I'm not sure I understand this" sounds genuinely patient and clarifying. The same response from a standard TTS stack sounds like text being read out loud.

The Research Foundation

Hume's approach is built on their emotion measurement research — they trained models on 53 distinct emotional states from large-scale human audio data. The output is not rule-based ("play emotion X when keyword Y appears"). It is a learned model that infers appropriate emotional register from semantic content.

Pricing: API access from $0.07/minute of audio generated. Enterprise plans for higher volume. The per-minute model makes cost estimation straightforward for voice agent applications.

OpenAI TTS: The Budget Option

OpenAI offers TTS through the same API as their language models. Six voices (Alloy, Echo, Fable, Onyx, Nova, Shimmer), two model tiers (tts-1 for speed, tts-1-hd for quality), and per-character pricing that is the lowest in the market for the quality delivered.

Why it matters: At $0.015 per 1,000 characters (tts-1) or $0.030 per 1,000 characters (tts-1-hd), a 10,000-character script costs $0.15 to $0.30, and a full audiobook chapter of ~60,000 characters costs $0.90 to $1.80. An hour of audio at typical narration pacing (130-150 words per minute, ~650-750 characters per minute, so roughly 39,000-45,000 characters) runs under $2. Those numbers make audio production economically viable at scale in ways that were not true 18 months ago.
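
The per-character arithmetic above can be checked with a short sketch. The rates and pacing figures are the ones quoted in this article; the function names are illustrative.

```python
def tts_cost(characters: int, rate_per_1k: float) -> float:
    """Cost in dollars for a character count at a per-1,000-character rate."""
    return characters / 1000 * rate_per_1k


def hourly_cost(chars_per_minute: int, rate_per_1k: float) -> float:
    """Cost of one hour of narration at a given pacing."""
    return tts_cost(chars_per_minute * 60, rate_per_1k)


if __name__ == "__main__":
    for model, rate in {"tts-1": 0.015, "tts-1-hd": 0.030}.items():
        print(f"{model}: 10,000 chars = ${tts_cost(10_000, rate):.2f}, "
              f"1 hour at 750 chars/min = ${hourly_cost(750, rate):.2f}")
```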

Quality comparison: OpenAI TTS is not at ElevenLabs quality for naturalness or prosody control. But it is good enough for many applications — internal tooling, draft audio, high-volume content where per-character cost matters more than maximum quality.

Best for: Prototyping voice applications, internal AI agent tools, budget-constrained production, situations where API simplicity matters (one vendor, one key, one billing relationship).

Additional Tools Worth Knowing

PlayHT 3.0

PlayHT focuses on ultra-low-latency streaming TTS, with sub-200ms latency to the first audio chunk. For real-time voice applications where response speed is critical, this is the relevant differentiator. The voice quality is competitive with ElevenLabs for conversational use cases.

Deepgram

Deepgram leads on the transcription side (speech-to-text), but their Aura TTS model has matured significantly. For applications requiring both transcription and synthesis — real-time call analysis, voice agent conversation loops — building on Deepgram reduces integration complexity.

Resemble AI

Strong voice cloning with real-time synthesis capabilities. The Resemble Localize feature handles voice-cloned multilingual output, competing directly with ElevenLabs Dubbing. Pricing is more enterprise-oriented.

Tool Comparison Table

| Tool | Quality | Latency | Voice Cloning | Emotional Intelligence | Price (per 1K chars unless noted) |
|---|---|---|---|---|---|
| ElevenLabs v3 | ★★★★★ | Fast | Pro-grade | Basic | ~$0.40 |
| Hume EVI | ★★★★☆ | Medium | Limited | ★★★★★ | $0.07/min |
| OpenAI TTS-HD | ★★★★☆ | Fast | None | None | $0.030 |
| OpenAI TTS | ★★★☆☆ | Fastest | None | None | $0.015 |
| PlayHT 3.0 | ★★★★☆ | Fastest | Available | Basic | ~$0.35 |
| Resemble AI | ★★★★☆ | Fast | Pro-grade | None | Enterprise |

Use Cases for Creators

Podcast Production Without Recording

The workflow: write your episode as a structured script, optimize for spoken delivery (short sentences, natural transitions, clear paragraph breaks), run through ElevenLabs with your cloned voice, add music and sound design in Descript or Adobe Audition.

I have produced three audio essays this way. The production time per episode: 2-3 hours (writing, prompting, editing) versus 5-8 hours (writing, recording, editing, re-recording sections that needed correction). The audio quality is indistinguishable from a well-produced recording in a quiet room.

The caveat: voice cloning requires clean source audio. If you have never recorded properly, invest in one recording session to create your voice model. A decent USB microphone and a quiet room produce sufficient source material.

Product Narration at Scale

Product pages, onboarding flows, tutorial videos — all of these benefit from consistent, professional narration. AI TTS delivers that consistency across 50 product pages without scheduling 50 recording sessions.

The practical workflow: write narration scripts as part of the content production process, tag them for TTS generation, batch-process via API, attach audio files to the corresponding content. Automation handles the repetitive work. Editorial judgment handles the script quality.
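
A minimal sketch of that batch step, under stated assumptions: `NarrationJob`, `run_batch`, and the `synthesize` callable are hypothetical names, and `synthesize` stands in for whichever TTS API call you use (it takes a script and a voice name and returns audio bytes). Files that already exist are skipped so a rerun does not pay for the same audio twice.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import Callable


@dataclass
class NarrationJob:
    slug: str    # content identifier, used as the output file name
    script: str  # narration text tagged for TTS generation
    voice: str   # voice name passed through to the TTS call


def run_batch(
    jobs: list[NarrationJob],
    synthesize: Callable[[str, str], bytes],
    out_dir: Path,
) -> list[Path]:
    """Generate one audio file per job, skipping files that already exist."""
    out_dir.mkdir(parents=True, exist_ok=True)
    written: list[Path] = []
    for job in jobs:
        target = out_dir / f"{job.slug}.mp3"
        if target.exists():
            continue  # already generated on a previous run
        target.write_bytes(synthesize(job.script, job.voice))
        written.append(target)
    return written
```

Passing the TTS call in as a parameter keeps the batch logic testable with a fake function and makes it easy to swap vendors.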

AI Assistants That Talk

The most significant unlock is voice-enabled AI agents. Text-based AI agents are useful. Voice-enabled agents are natural.

The architecture: user speech → Deepgram transcription → language model response → ElevenLabs synthesis → audio output. The full loop can run in under 3 seconds end-to-end with current infrastructure. For conversational applications — customer support, educational tutoring, personal assistant interfaces — this latency is within the threshold of natural conversation.

Hume EVI simplifies this architecture by handling the synthesis layer with emotional intelligence built in. The tradeoff is less control over voice customization.
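
The speech-to-speech loop described above can be sketched as three injected stages with per-stage timing, which is useful for seeing where the latency budget goes. The stage functions are hypothetical stand-ins: in a real build they would wrap your transcription, language model, and synthesis clients, and nothing here calls a vendor API.

```python
import time
from typing import Callable


def run_turn(
    audio_in: bytes,
    transcribe: Callable[[bytes], str],
    respond: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> tuple[bytes, dict[str, float]]:
    """Run one conversational turn and record seconds spent in each stage."""
    timings: dict[str, float] = {}

    start = time.perf_counter()
    user_text = transcribe(audio_in)        # speech -> text
    timings["transcribe"] = time.perf_counter() - start

    start = time.perf_counter()
    reply_text = respond(user_text)         # text -> model response
    timings["respond"] = time.perf_counter() - start

    start = time.perf_counter()
    audio_out = synthesize(reply_text)      # response -> speech
    timings["synthesize"] = time.perf_counter() - start

    timings["total"] = (
        timings["transcribe"] + timings["respond"] + timings["synthesize"]
    )
    return audio_out, timings
```

This version runs the stages strictly in sequence. Streaming partial results between stages is the usual way to cut perceived latency, and it is not shown here.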

Audio Versions of Written Content

Every article on frankx.ai is a candidate for audio conversion. The production cost per article: under $1. The distribution opportunity: podcast feeds, audio newsletters, accessibility for readers who prefer listening.

The pattern I follow: publish the written version, run the MDX content through a preprocessing step that strips markdown formatting, feed clean text to ElevenLabs API, upload the audio file, link from the article. Total additional work per article: under 5 minutes, most of it automated.
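
As a rough illustration of the preprocessing step, the sketch below strips common markdown and MDX syntax with regular expressions. This is an assumption about how such a step could work, not the pipeline actually used for this site, and a real MDX parser would be more robust. Known limits: it removes every underscore and asterisk, so snake_case identifiers lose their underscores, and any prose line that begins with "import" or "export" is dropped.

```python
import re


def strip_markdown(mdx: str) -> str:
    """Reduce markdown/MDX source to plain text suitable for TTS input."""
    text = re.sub(r"`{3}.*?`{3}", "", mdx, flags=re.DOTALL)            # fenced code blocks
    text = re.sub(r"^(import|export)[ \t].*$", "", text, flags=re.MULTILINE)  # MDX import/export lines
    text = re.sub(r"<[^>]+>", "", text)                                # JSX/HTML tags, keeping inner text
    text = re.sub(r"!\[[^\]]*\]\([^)]*\)", "", text)                   # images
    text = re.sub(r"\[([^\]]+)\]\([^)]*\)", r"\1", text)               # links become their label
    text = re.sub(r"^#{1,6}[ \t]*", "", text, flags=re.MULTILINE)      # heading markers
    text = re.sub(r"^[ \t]*[-*+][ \t]+", "", text, flags=re.MULTILINE) # bullet markers
    text = re.sub(r"\*\*|__|\*|_|`", "", text)                         # emphasis and inline-code markers
    text = re.sub(r"\n{3,}", "\n\n", text)                             # collapse extra blank lines
    return text.strip()


if __name__ == "__main__":
    sample = "# Title\n\nSome **bold** text with a [link](https://example.com)."
    print(strip_markdown(sample))
```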

Pricing Breakdown: Full Production Scenarios

Audio blog post (2,000 words, ~12,000 characters):

- ElevenLabs Creator: ~$2.64 of plan value (12% of the monthly allotment)

- ElevenLabs API Pro: ~$4.80

- OpenAI TTS-HD: ~$0.36

Full podcast episode (5,000 words, ~30,000 characters):

- ElevenLabs Creator: ~$6.60 of plan value (30% of the monthly allotment)

- ElevenLabs API Pro: ~$12

- OpenAI TTS-HD: ~$0.90

Audiobook chapter (10,000 words, ~60,000 characters):

- ElevenLabs Pro plan: ~$24

- OpenAI TTS-HD: ~$1.80

Voice agent (per conversation, average 5 minutes):

- Hume EVI: ~$0.35

- ElevenLabs streaming: ~$1.50-2.00

- OpenAI TTS realtime: ~$0.50

The cost structure favors written content conversion (cheap) over real-time voice agents (more expensive but still viable). Both are economically accessible at scales that were not possible before 2025.

Voice Cloning Ethics

This part of voice AI deserves explicit attention rather than a footnote.

Voice cloning is a dual-use capability. The same technology that lets you clone your own voice for production efficiency can be used to create unauthorized voice content of other people. The platforms all have policies against this — ElevenLabs requires consent verification for cloning voices that are not the account holder's. But policy enforcement is imperfect.

Three clear rules I follow:

Only clone voices you own or have explicit permission to clone. Your own voice: clear. A collaborator who has given written consent: clear. A public figure or creator without permission: not acceptable.

Label AI-generated audio when appropriate. For clearly fictional or experimental content, disclosure may be optional. For content that could be mistaken for a real person's statement, disclosure is required. The standard: if a listener might form false beliefs about who said something, label it.

Do not use voice cloning to impersonate in contexts where impersonation causes harm. This includes financial fraud, political disinformation, and harassment. These are obvious violations — but stating them explicitly matters in a space where the technology moves faster than the norms.

The ethical use of voice AI — cloning your own voice, producing audio from your own writing, building products with appropriate consent — is straightforward and creates real value. The same research that makes this possible can be misused. Being explicit about the distinction is part of responsible use.

Connecting Voice to the GenCreator Framework

The GenCreator framework treats audio as one of seven content dimensions. In practice, this means audio is not an afterthought — it is planned alongside written and visual content from the beginning of a content cycle.

The integration points:

Content pipeline: Scripts are written with dual-mode delivery in mind — structured for both reading and listening. Sentence length, vocabulary choices, and paragraph rhythm are tuned for spoken delivery.

Automation layer: n8n workflows handle the batch conversion of written articles to audio files. The same pipeline covered in the Suno music production workflow extends to voice AI — both use API triggers and file management automation.

Distribution: Audio files feed into a podcast RSS feed, allowing blog content to reach audiences who prefer listening. The same content, two formats, one production process.

Prompt library: The prompt library includes voice script optimization prompts — formatting written content for TTS delivery, structuring scripts for specific voice models, and calibrating emotional beats for Hume's EVI model.
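
For the distribution step, a podcast feed is an RSS 2.0 document in which each item carries an `enclosure` element pointing at the audio file. The sketch below builds a bare-bones feed with the standard library. The `Episode` type, the function name, and the example URLs are illustrative assumptions, and podcast directories typically expect additional tags that are omitted here.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass


@dataclass
class Episode:
    title: str
    audio_url: str   # public URL of the generated audio file
    size_bytes: int  # file size, required by the enclosure element


def build_feed(title: str, link: str, description: str, episodes: list[Episode]) -> str:
    """Return a minimal RSS 2.0 feed as an XML string."""
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = title
    ET.SubElement(channel, "link").text = link
    ET.SubElement(channel, "description").text = description
    for ep in episodes:
        item = ET.SubElement(channel, "item")
        ET.SubElement(item, "title").text = ep.title
        ET.SubElement(
            item,
            "enclosure",
            url=ep.audio_url,
            length=str(ep.size_bytes),
            type="audio/mpeg",
        )
    return ET.tostring(rss, encoding="unicode")


if __name__ == "__main__":
    feed = build_feed(
        "Example Audio Essays",
        "https://example.com",
        "Audio versions of written articles.",
        [Episode("Voice AI Agents", "https://example.com/audio/voice-ai.mp3", 4_200_000)],
    )
    print(feed)
```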

The research hub at /research/ai-creative-tools tracks voice AI developments, model updates, and pricing changes as the market evolves rapidly.

What Comes Next

Three developments on the near-term horizon:

Real-time multilingual conversation. ElevenLabs and Hume are both building toward real-time voice translation with preserved voice identity. You speak in English, the agent responds in the user's language in a voice that sounds like yours. For global products, this eliminates the localization bottleneck.

Voice as interface layer. As voice quality improves and latency drops, voice becomes a primary interface for AI tools — not just a feature. The friction of typing queries will drive many users toward voice interaction, especially on mobile. Products that build voice-first will have an interaction model advantage.

Personalized voice agents. The next step past consistent voice quality is adaptive voice quality — agents that learn individual user preferences for pacing, formality, and emotional register and adjust over time. Hume's research direction points here. The technical foundation exists; the production implementation is 12-18 months out.

Voice AI in 2026 is not a future technology. It is a present capability, with production APIs, reasonable pricing, and quality that clears the bar for creator and product use cases. The window for early adoption advantage is still open.

FAQ

Is ElevenLabs worth it over OpenAI TTS?

For audio that audiences will hear — podcasts, narration, product features — yes. ElevenLabs quality is meaningfully better for natural prosody, emotional range, and voice customization. OpenAI TTS is the right choice for internal tooling, draft content, or high-volume applications where cost per character matters more than maximum naturalness.

How much source audio do I need to clone my voice?

ElevenLabs Instant Voice Clone works with 1 minute of clean audio. Professional Voice Clone requires 30+ minutes. For production-quality cloning that holds up across long-form narration, collect 1-2 hours of clean source material — this produces a model that stays consistent across varied text and emotional registers.

Can I use a cloned voice in commercial products?

With your own voice: yes, with standard commercial licensing from your TTS provider. With another person's voice: you need their explicit written consent and should review the platform's terms for commercial use. ElevenLabs has a rights management system for this. Check the current terms before shipping any commercial product built on a cloned voice.

What is the quality difference between tts-1 and tts-1-hd from OpenAI?

tts-1-hd is noticeably more natural — better prosody, more variation in intonation, less robotic rhythm. The price doubles ($0.015 vs $0.030 per 1,000 characters). For most content at scale, tts-1-hd is worth the premium. Use tts-1 for internal tools where latency matters more than quality.

How do voice agents compare to text chat for AI assistants?

For use cases with screen access and complex information exchange, text is more efficient — the user can skim, copy, and reference. For mobile, hands-free, or conversational contexts, voice is more natural and faster for simple queries. The best products are multimodal — default to the interface that fits the context, not one or the other exclusively.

Related resources: GenCreator production framework | AI creative tools research | Suno music production: 12,000 songs of lessons | Prompt library

Get Started

Build your first AI system

Step-by-step guide to setting up ACOS, creating your first agent, and shipping real products with AI.

Start buildingTemplates & Blueprints

Production-ready architecture

Download AI architecture templates, multi-agent blueprints, and prompt engineering patterns.

Browse templatesInner Circle

Join the builder community

Connect with creators and architects shipping AI products. Weekly office hours, shared resources, direct access.

Join the circleRead on FrankX.AI — AI Architecture, Music & Creator Intelligence

Stay in the intelligence loop

Weekly field notes on AI systems, production patterns, and builder strategy.

Continue Reading

AI & Systems9 min read

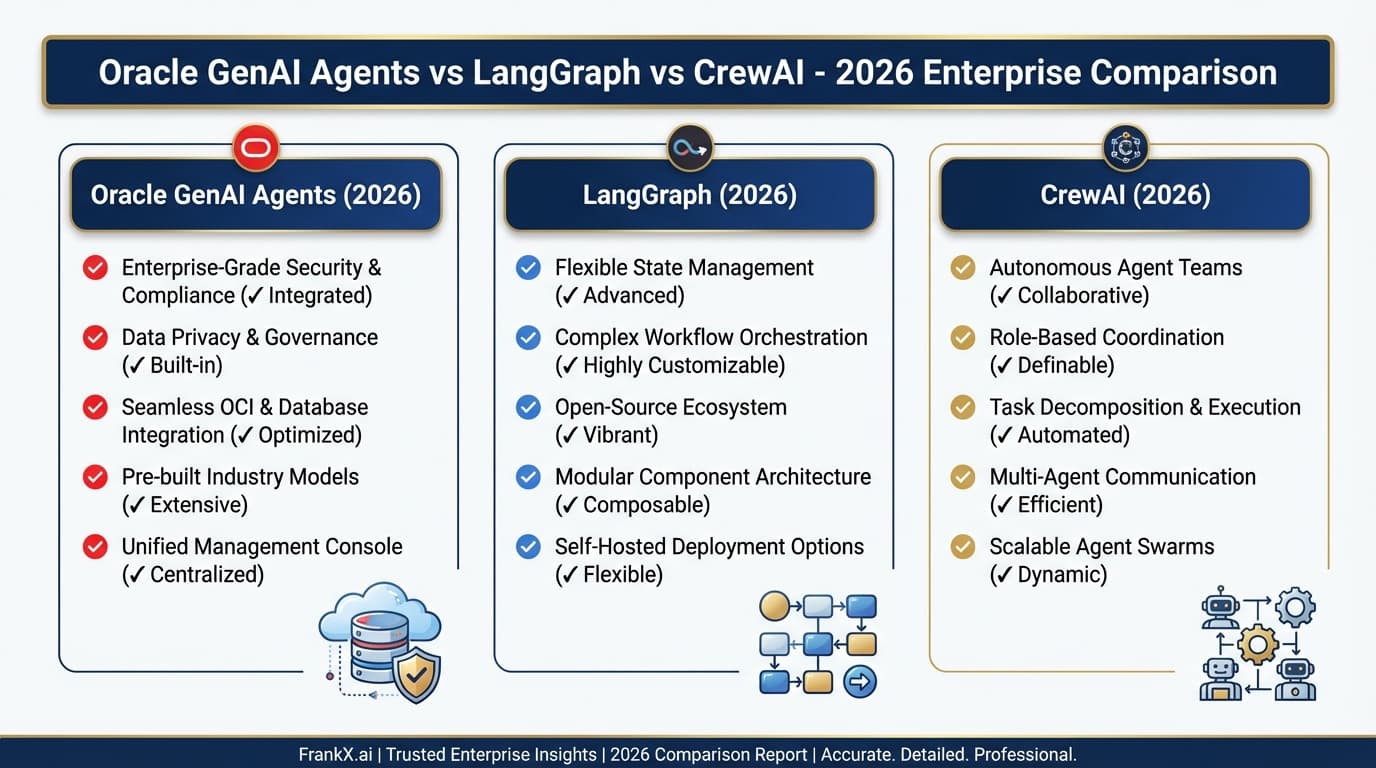

Oracle GenAI Agents vs LangGraph vs CrewAI: Enterprise AI Agent Comparison 2026

A practical comparison of OCI GenAI Agents, LangGraph, and CrewAI for enterprise deployments. Features, pricing, and decision framework from an AI architect's perspective.

Read article

AI Architecture9 min read

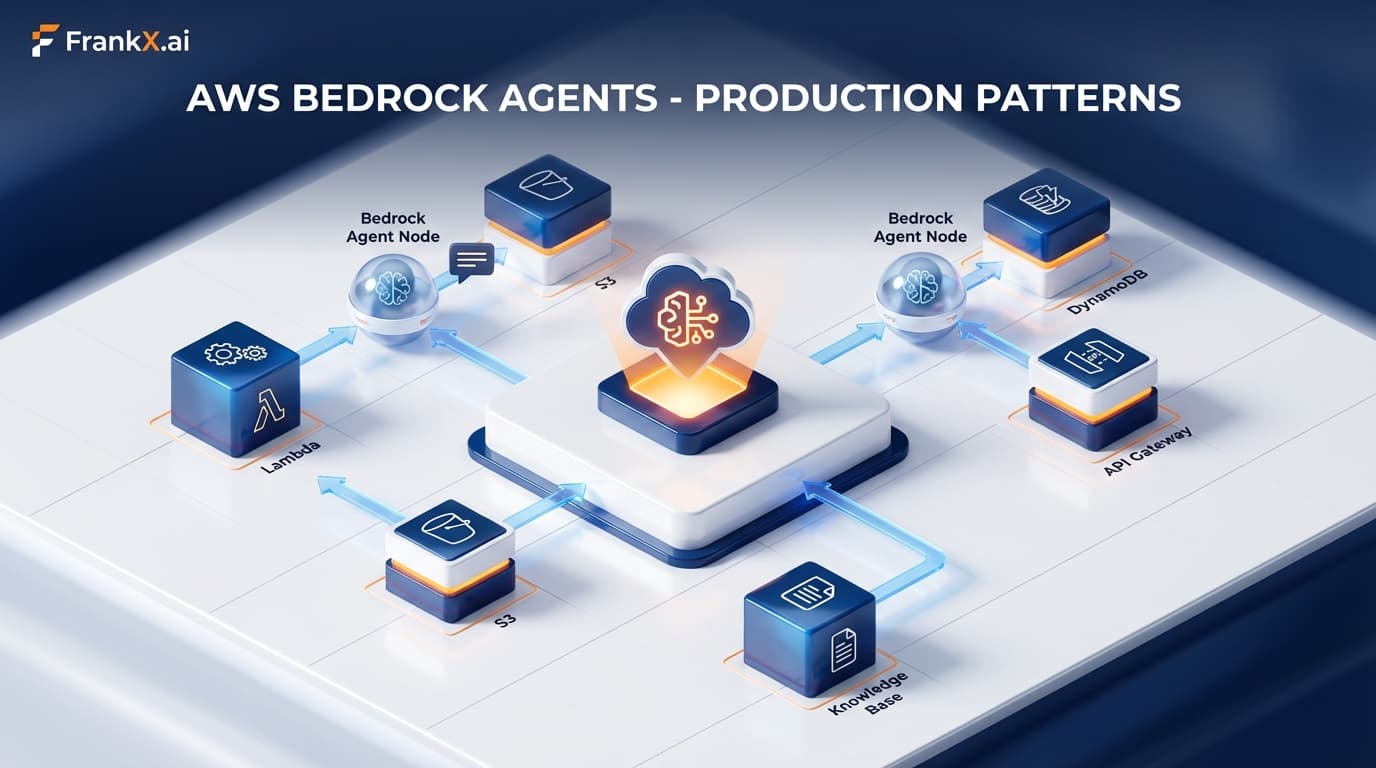

AWS Bedrock AgentCore Deep Dive: Production Patterns for Enterprise AI Agents

A comprehensive guide to building production-ready AI agents on AWS using Bedrock, AgentCore, and the Strands framework. Learn the architectural patterns, security controls, and operational best practices that power enterprise agent deployments.

Read article

AI Architecture9 min read

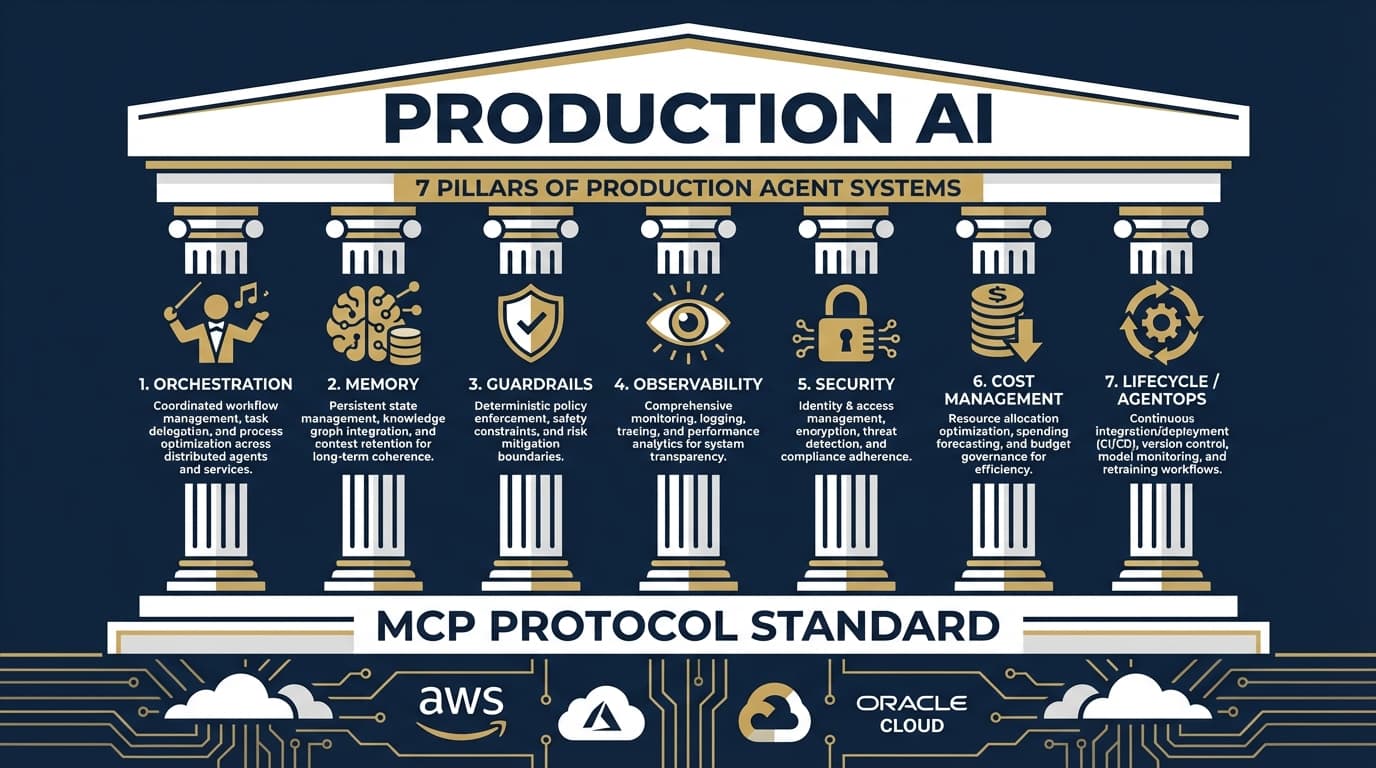

The 7 Pillars of Production Agent Systems: What Actually Matters in 2026

2026 marks the shift from AI demos to production deployments. Here's the architectural framework that emerged from analyzing AWS, Azure, Google Cloud, OpenAI, Anthropic, and Oracle's approaches to production-ready AI agents.

Read article