AI Agent Memory: From Chat History to Persistent Systems

Intelligence Dispatches · March 16, 2026 · 4 min read

How to give AI agents memory that persists across sessions — CLAUDE.md, mem0, ChromaDB, and the architecture that makes agents smarter over time.

🎯

Reading Goal

You will understand the memory architecture for AI agents — from ephemeral chats to persistent systems that compound knowledge across sessions.

TL;DR: Most AI conversations start from zero. Agent memory fixes this with four layers: session memory (ephemeral), project memory (CLAUDE.md — persistent in the codebase), user memory (mem0 — cross-session facts), and knowledge memory (ChromaDB — semantic search). Together they create agents that compound — getting smarter with every session.

The Problem

Open a new AI chat. Ask it to help with your project. Watch it ask you to explain everything from scratch.

I run a complex setup: a Next.js site, an agent orchestration system, 65+ music tracks, n8n workflows, and a two-repo architecture. Every session, I used to spend the first five minutes re-establishing context. Which repo is production. What voice rules apply. That my name is Frank Riemer.

That is solved now. Here is how.

The 4 Layers

Layer 1: Session Memory

The conversation context. Ephemeral. Dies when the session ends. Essential while running, gone when it stops.

Layer 2: Project Memory (CLAUDE.md)

Structured files loaded into every session automatically. Persistent in the codebase.

I run three levels:

~/CLAUDE.md: global identity

project/CLAUDE.md: architecture, deployment, brand rules

project/.claude/CLAUDE.md: agent-level memory, recent activity

My project CLAUDE.md includes: two-repo architecture, voice rules, anti-patterns, decision framework. None of this needs re-explaining each session.
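The three levels above can be pictured as a simple merge from broadest to most specific. This is a minimal sketch of that layering idea only. It does not describe how Claude Code loads these files internally, and the function names `memory_files` and `build_briefing` are hypothetical.

```python
from pathlib import Path


def memory_files(home: Path, project: Path) -> list[Path]:
    """Return the CLAUDE.md files that exist, ordered from broadest to most specific."""
    candidates = [
        home / "CLAUDE.md",                 # global identity
        project / "CLAUDE.md",              # architecture, deployment, brand rules
        project / ".claude" / "CLAUDE.md",  # agent-level memory, recent activity
    ]
    return [path for path in candidates if path.is_file()]


def build_briefing(home: Path, project: Path) -> str:
    """Concatenate the layers into one briefing, labelling each with its source path."""
    sections = []
    for path in memory_files(home, project):
        body = path.read_text(encoding="utf-8").strip()
        sections.append(f"# Source: {path}\n{body}")
    return "\n\n".join(sections)
```

Missing levels are skipped, so the same call works whether a project has one file or all three.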

Layer 3: User Memory (mem0)

Cross-session, cross-project facts. Preferences that travel with you.

mem0 via MCP stores retrievable facts: "Frank prefers positive framing." "Use Resend not SendGrid." Open a new project, connect the same mem0 instance, and Claude already knows who you are.
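To make the idea of user-level facts concrete, here is a toy in-memory store. It is a stand-in for the concept, not mem0's API: the class `UserMemory` and its methods are hypothetical, and the keyword match below is far cruder than the retrieval a real memory service would offer.

```python
from dataclasses import dataclass, field


@dataclass
class UserMemory:
    """Toy cross-project fact store keyed by user. Not mem0's API."""

    facts: dict[str, list[str]] = field(default_factory=dict)

    def add(self, user_id: str, fact: str) -> None:
        """Store a fact once per user, ignoring exact duplicates."""
        stored = self.facts.setdefault(user_id, [])
        if fact not in stored:
            stored.append(fact)

    def search(self, user_id: str, query: str) -> list[str]:
        """Return facts sharing at least one word with the query (case-insensitive)."""
        words = set(query.lower().split())
        return [
            fact
            for fact in self.facts.get(user_id, [])
            if words & set(fact.lower().split())
        ]


memory = UserMemory()
memory.add("frank", "Frank prefers positive framing.")
memory.add("frank", "Use Resend not SendGrid.")
matches = memory.search("frank", "resend or sendgrid?")
```

The point of the sketch is the keying: facts hang off the user, not the project, so any project that connects to the same store sees them.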

I also use Claude Code's auto-memory system — structured topic files in /home/frankx/.claude/projects/.../memory/. MEMORY.md as the index. Topic files for music production, architecture, visual intelligence.

Layer 4: Knowledge Memory (ChromaDB/RAG)

Semantic search over documents. You embed your content, store as vectors, and retrieve relevant chunks on demand.

Unlike CLAUDE.md (loaded in full), a vector database holds thousands of documents and retrieves only the relevant ones. You do not pay context cost for irrelevant information.
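The retrieve-only-what-matches behavior can be sketched without any vector database. The example below is an illustration under loud assumptions: word counts stand in for real embeddings, a sorted list stands in for ChromaDB, and the sample chunks are made up for the demo. None of it is ChromaDB's API.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts. Real systems use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query, best match first."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]


# Made-up sample chunks for illustration.
chunks = [
    "Production deploys run from the vercel-ui-ux worktree.",
    "Transactional email goes through Resend.",
    "Music tracks are tagged by genre and mood.",
]
top = retrieve("which worktree handles production deploys", chunks, k=1)
```

Only the top-ranked chunk would enter the context window; the other two cost nothing for this query.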

How Memory Compounds

Session 1: Establish that the production repo is .worktrees/vercel-ui-ux. The agent writes this to CLAUDE.md.

Session 5: Push back on a code pattern. Agent stores a preference.

Session 20: Agent knows architecture, preferences, anti-patterns, and domain context. Fewer wrong assumptions. Faster work.

This is information architecture applied to agent systems. Same principle as a well-maintained wiki over scattered notes.

What to Store vs What Not

Store: Preferences, project context, architectural decisions, feedback, reference links.

Skip: Code patterns (read the code), git history (use git log), ephemeral task state, credentials (plaintext risk).
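One way to enforce the credentials rule is a guard that runs before anything is written to memory. This is a rough sketch: the patterns and the name `safe_to_store` are hypothetical, and a regex check like this catches only obvious cases, so it complements a real secret scanner and does not replace one.

```python
import re

# Hypothetical patterns; extend them for the secret formats you actually use.
SECRET_PATTERNS = [
    # key=value or key: value where the key name suggests a credential
    re.compile(r"(?i)(api[_-]?key|secret|token|password)\w*\s*[:=]\s*\S+"),
    # PEM private key header
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
]


def safe_to_store(entry: str) -> bool:
    """Return False when the entry looks like it contains a credential."""
    return not any(pattern.search(entry) for pattern in SECRET_PATTERNS)
```

A preference such as "Use Resend not SendGrid." passes, while a line like `RESEND_API_KEY=...` is rejected before it reaches a plaintext file.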

Memory and the Personal AI CoE

In the Personal AI CoE (Center of Excellence) framework, memory is Domain 2: Knowledge and Research. Enterprise AI CoEs solve this with knowledge management teams. The personal version: CLAUDE.md + auto-memory + local vector database. Same architecture. Fraction of the cost.

ACOS uses memory as the substrate that makes the agent network coherent. Without shared memory, each agent operates in isolation. With it, they hand off context and build on each other's work.

The Starlight Intelligence System goes deeper on how knowledge accumulates across agent networks.

FAQ

CLAUDE.md vs mem0?

CLAUDE.md is project-specific, version-controlled. mem0 stores user-level facts that persist across projects. Use both.

How much in CLAUDE.md?

200-500 lines of high-signal content. Enough to brief a collaborator, not document the project.
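A quick check can keep the file inside that range. The sketch below assumes that "lines" means non-blank lines, which is my reading and not something the guideline specifies. The function name `size_report` is hypothetical.

```python
def size_report(text: str, low: int = 200, high: int = 500) -> str:
    """Classify a memory file by its count of non-blank lines."""
    lines = sum(1 for line in text.splitlines() if line.strip())
    if lines < low:
        return f"{lines} lines: under the target range"
    if lines > high:
        return f"{lines} lines: over the target range, consider trimming"
    return f"{lines} lines: within the target range"
```

Run it against the contents of CLAUDE.md, for example in a pre-commit hook, to notice when the file starts turning into documentation.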

Can the agent write to memory?

Yes. Claude Code auto-memory writes during sessions. mem0 via MCP allows explicit writes. I review CLAUDE.md changes manually before committing.

What about stale memory?

Stale memory is worse than none: the agent acts on wrong information with full confidence. Review quarterly. Archive what no longer applies.
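A quarterly review is easier when each entry carries a last-reviewed date. The sketch below assumes you track those dates yourself. The 90-day default, the function name `stale_entries`, and the sample dates are all hypothetical.

```python
from datetime import date, timedelta


def stale_entries(
    entries: dict[str, date], today: date, max_age_days: int = 90
) -> list[str]:
    """Return entries last reviewed more than max_age_days ago, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    overdue = [
        (reviewed, text) for text, reviewed in entries.items() if reviewed < cutoff
    ]
    return [text for reviewed, text in sorted(overdue)]


# Sample data with made-up review dates.
entries = {
    "Production repo is .worktrees/vercel-ui-ux": date(2025, 10, 1),
    "Use Resend not SendGrid": date(2026, 3, 1),
}
overdue = stale_entries(entries, today=date(2026, 3, 16))
```

Anything the function returns is a candidate for re-confirming or archiving, not for automatic deletion.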

How to start from zero?

Create a CLAUDE.md. Add the 3 things you explain most at session start. Use it for a week. Add what is still missing. Layer in auto-memory after the first month.

Get Started

Build your first AI system

Step-by-step guide to setting up ACOS, creating your first agent, and shipping real products with AI.

Start buildingTemplates & Blueprints

Production-ready architecture

Download AI architecture templates, multi-agent blueprints, and prompt engineering patterns.

Browse templatesInner Circle

Join the builder community

Connect with creators and architects shipping AI products. Weekly office hours, shared resources, direct access.

Join the circleRead on FrankX.AI — AI Architecture, Music & Creator Intelligence

Stay in the intelligence loop

Weekly field notes on AI systems, production patterns, and builder strategy.

Continue Reading

Workshops12 min

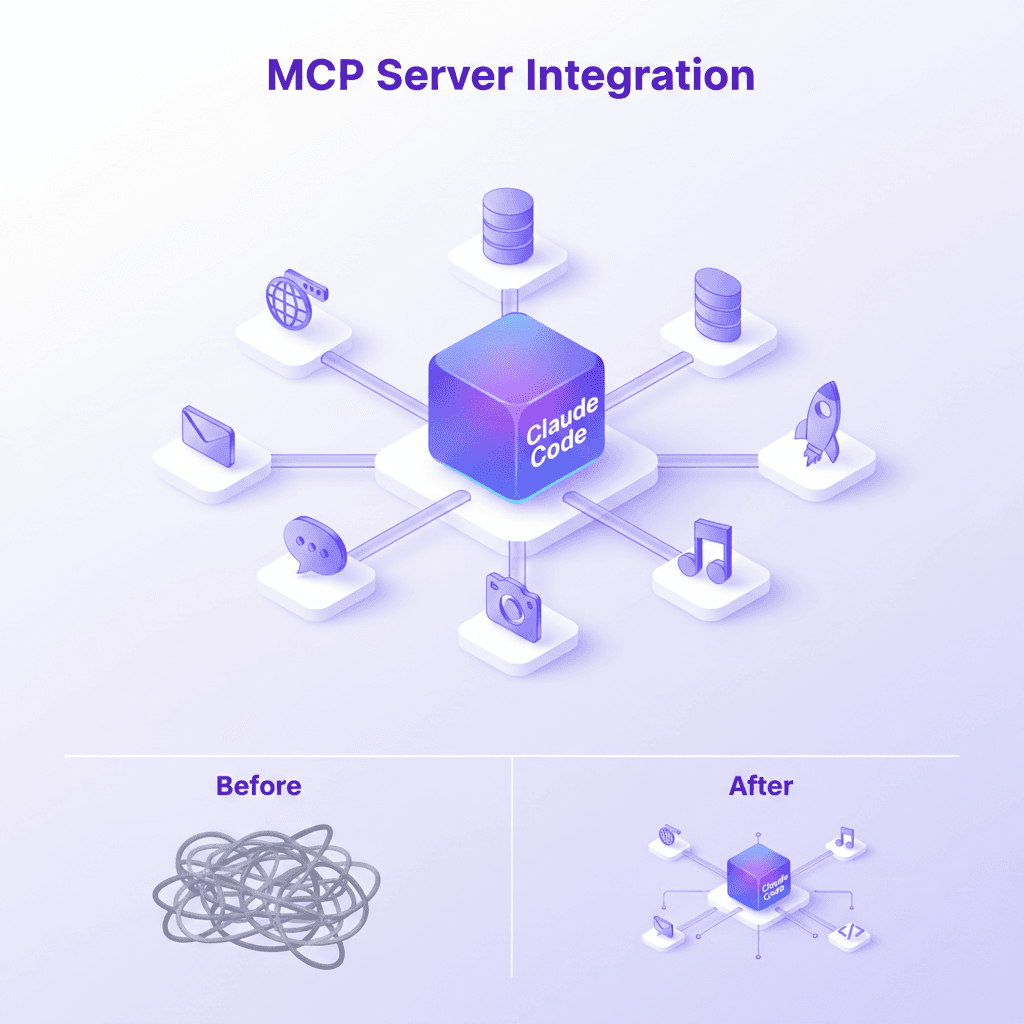

Build Your First MCP Server: The Model Context Protocol Workshop

Learn to build production-grade MCP servers that connect AI to your data. Master resources, tools, and prompts with the open standard revolutionizing AI integration.

Read article

Creator Systems6 min read

How I Configured Claude Code with ACOS and 21 MCP Servers

Step-by-step guide to building a production-grade AI coding environment with ACOS, Claude Code, MCP servers, and custom agent workflows. From zero to shipping.

Read article

Intelligence Dispatches11 min read

MCP Server Guide 2026: ClawHub, Smithery, and What to Install

MCP servers, registries, and the protocol connecting AI assistants to databases, APIs, browsers, and developer tools. Includes my 21-server production stack.

Read article