AI Workshops for Students: What Actually Worked

Creator Systems | March 14, 2026 | 12 min read


Lessons from building an AI education system for students — the tools, the curriculum, and what 2026 learners actually need to know.


Reading Goal

You will know how to structure AI education for students — which tools to teach first, which concepts matter, and how to build progressive skill paths.

TL;DR: I built an AI education ecosystem on frankx.ai: a student hub, 5 workshops, a professor toolkit, an AI briefing page, and an AI Center of Excellence (CoE) builder tool. The lesson: students do not need to learn every AI tool. They need three capabilities: prompt engineering, workflow automation, and critical evaluation of AI output. Everything else builds on those. This post covers what worked, what failed, and the curriculum structure I would use again.

Why I Built This

I spend my days at Oracle designing AI Center of Excellence frameworks for enterprises — Fortune 500 companies paying for the same six-pillar architecture that I eventually realized individuals could run at a fraction of the cost. The same insight applies to education.

Universities are still running AI electives. Optional. Siloed in computer science departments. A student studying law, journalism, architecture, or biology gets almost no structured AI education — even as every one of those fields is being reshaped by the tools they are not being taught.

The gap is not about access to tools. Students have access to Claude, ChatGPT, Gemini. The gap is structured learning — a progression from "I can use this" to "I can build with this" to "I can evaluate this critically."

I built the frankx.ai student ecosystem to fill that gap. Not as a university course. As a free, self-directed system anyone could work through in 90 days.

Here is what I learned.

The Ecosystem: What Got Built

The student hub is the entry point — an orientation page with four tracks: Foundation, Builder, Creator, and Researcher. Each track has a recommended path through the available resources.

The five workshops cover the practical stack:

- Personal AI Assistant Setup — Building a Claude-based assistant with memory and custom instructions

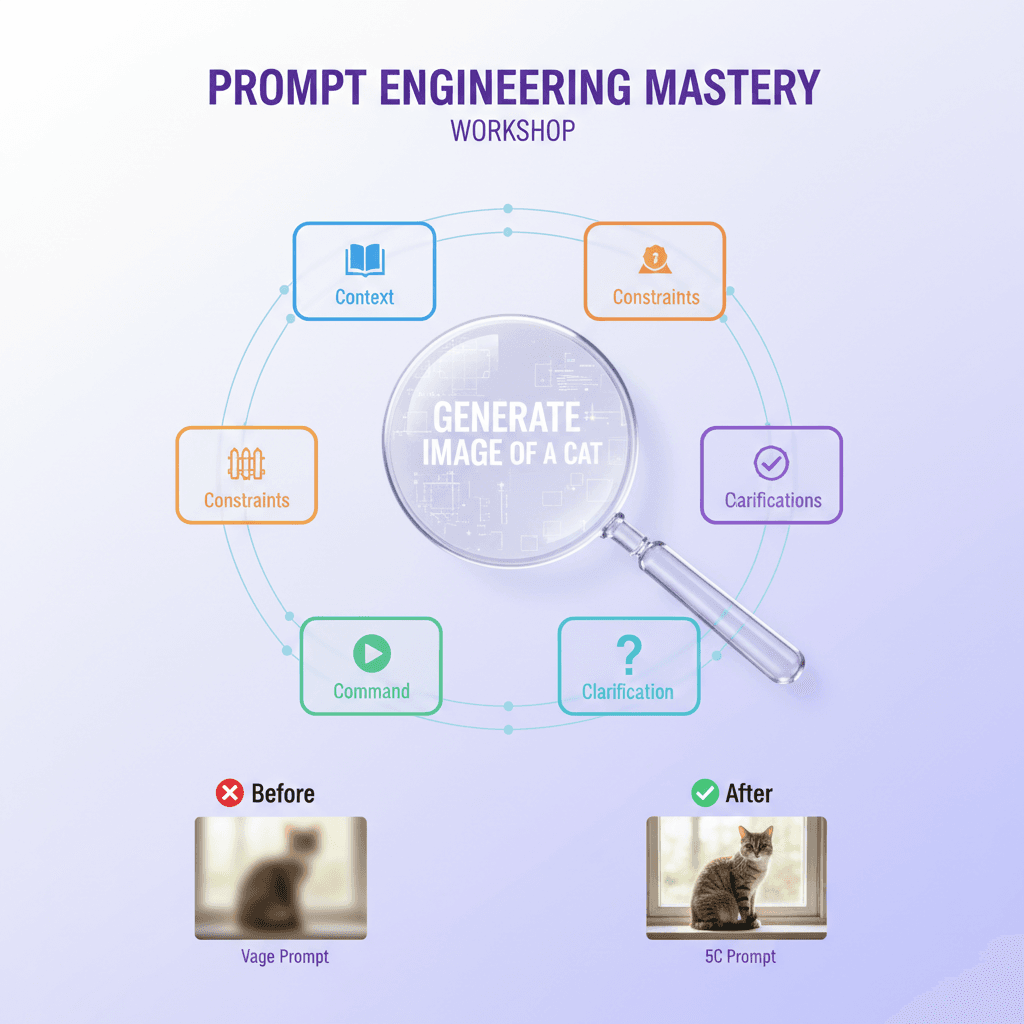

- Prompt Engineering Mastery — Structured techniques: chain-of-thought, role framing, constraint-based prompting

- AI Music Creation — Suno for non-musicians. Creating, iterating, and publishing original tracks

- MCP Server Architecture — Connecting AI to real tools via Model Context Protocol

- Creators AI Toolkit — Combining Claude, Suno, and automation into a working creative pipeline

The AI briefing page is a living resource — updated monthly with the most important AI developments framed for students: what changed, why it matters for their field, what to do with it. Not news aggregation. Filtered signal.

The professor toolkit gives educators structured workshop materials, rubrics for assessing AI work, and a guide for integrating AI into existing syllabi without making it "the AI class."

The AI CoE builder is a tool that takes students through a 20-question assessment and outputs a personalized AI Center of Excellence plan — the same six-pillar framework I build for enterprises, scaled to an individual learner.

The Three Capabilities That Actually Matter

After running students through 5 workshops and watching where they got stuck and where they accelerated, I reduced the entire curriculum to three core capabilities. Every workshop now builds toward at least one of them.

Capability 1: Prompt Engineering

Not "writing better prompts." Structured prompt construction — understanding that a prompt is a specification, not a request.

Students who grasp this write prompts that are:

- Role-scoped: "You are a legal researcher reviewing contracts for ambiguous liability clauses."

- Constraint-bounded: "Output in JSON. Maximum 200 words. Include confidence score."

- Iteration-aware: They expect the first output to be a draft, not a final answer.
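To make "a prompt is a specification" concrete, here is a minimal Python sketch. The `PromptSpec` class and `check_draft` helper are hypothetical names for illustration, not workshop material or part of any SDK, and no model is called. The sketch renders a role, a task, and explicit constraints into one prompt, then checks a draft reply against those constraints so that a failed check becomes the next iteration rather than a dead end.

```python
import json
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """A prompt treated as a specification: role, task, and explicit constraints."""
    role: str
    task: str
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Fixed order: who the model is, what it must do, how the output is bounded.
        lines = [f"You are {self.role}.", "", f"Task: {self.task}"]
        if self.constraints:
            lines += ["", "Constraints:"]
            lines.extend(f"- {c}" for c in self.constraints)
        return "\n".join(lines)


def check_draft(output: str, max_words: int = 200) -> list[str]:
    """Return the constraints a draft reply breaks (an empty list means it passes)."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]
    problems = []
    # Count words across the string fields only.
    text = " ".join(v for v in data.values() if isinstance(v, str))
    if len(text.split()) > max_words:
        problems.append(f"more than {max_words} words")
    if "confidence" not in data:
        problems.append("missing confidence score")
    return problems


spec = PromptSpec(
    role="a legal researcher reviewing contracts for ambiguous liability clauses",
    task="Identify every liability clause whose scope is ambiguous.",
    constraints=["Output in JSON.", "Maximum 200 words.", "Include a confidence score."],
)
print(spec.render())
```

A draft that is valid JSON, stays under 200 words, and carries a `confidence` field passes with an empty list; anything else comes back naming the specific constraint it broke, which is exactly the feedback the next iteration needs.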

Students who miss this treat AI like a search engine. They type a vague question, get a mediocre output, and conclude the tool is limited. The tool is fine. The specification was incomplete.

This is teachable in 90 minutes. The Prompt Engineering Mastery workshop spends 60 minutes on the technique, then 30 minutes on hands-on practice building a system prompt for a personal AI assistant.

Capability 2: Workflow Automation

The difference between a student who uses AI and a student who builds with AI is automation. Using AI means opening a chat window. Building with AI means creating a system where tasks flow through AI without requiring constant manual input.

The entry point is n8n — an open-source workflow automation tool that connects AI models, APIs, and data sources without requiring deep programming knowledge. A student can build a workflow that monitors a research topic, summarizes new developments daily, and sends them a briefing — in about two hours.
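In n8n this workflow is a visual chain of nodes. To show the logic those nodes carry, here is a plain-Python sketch of the same three steps: monitor, summarize, deliver. It is not n8n code. The function names are hypothetical, `summarize` is a placeholder marking where a real workflow would call an AI model, and actually sending the briefing (email, chat) is left out.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Item:
    """One entry fetched from a source the workflow watches (feed, search, API)."""
    url: str
    title: str
    text: str


def select_new(items: list[Item], seen_urls: set[str], topic: str) -> list[Item]:
    """Monitor step: keep unseen items that mention the topic.

    Every fetched URL is added to seen_urls (mutated in place) so the
    next run skips it, whether or not it matched.
    """
    fresh = []
    for item in items:
        if item.url in seen_urls:
            continue
        seen_urls.add(item.url)
        if topic.lower() in f"{item.title} {item.text}".lower():
            fresh.append(item)
    return fresh


def summarize(item: Item, max_words: int = 25) -> str:
    """Summarize step. Placeholder only: truncates the text where a real
    workflow would send it to an AI model for a summary."""
    words = item.text.split()
    summary = " ".join(words[:max_words])
    return summary + ("..." if len(words) > max_words else "")


def build_briefing(topic: str, items: list[Item], day: date) -> str:
    """Deliver step: format the briefing text (sending it is out of scope)."""
    header = f"{topic} briefing for {day.isoformat()}"
    if not items:
        return f"{header}\nNo new developments today."
    lines = [header] + [f"- {i.title}: {summarize(i)} ({i.url})" for i in items]
    return "\n".join(lines)


def run_daily(topic: str, fetched: list[Item], seen_urls: set[str], day: date) -> str:
    """One scheduled run: monitor, summarize, and format the briefing."""
    return build_briefing(topic, select_new(fetched, seen_urls, topic), day)
```

The `seen_urls` set is what keeps yesterday's items out of today's briefing; in a real deployment it would have to be persisted between scheduled runs.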

That shift from consumer to builder is one of the most visible transformations in the student cohorts. Once students see that AI can be wired into repeating processes, the way they think about their own workflows changes.

The MCP Server Architecture workshop introduces this at the protocol level — how AI models connect to external tools. Not every student needs to build MCP servers. But understanding the architecture changes how they think about what AI can reach.
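At the protocol level, MCP messages are JSON-RPC 2.0. This deliberately simplified Python sketch answers the two requests at the heart of tool use: `tools/list` (what can this server do?) and `tools/call` (do it). It omits the initialization handshake, the transport layer (stdio or HTTP), and everything else a real server needs; for real work, an official MCP SDK is the safer route. The `word_count` tool is a made-up example.

```python
import json

# Hypothetical example tool; a real server would wrap an API, database, or file system.
TOOLS = {
    "word_count": {
        "description": "Count the words in a piece of text.",
        "inputSchema": {
            "type": "object",
            "properties": {"text": {"type": "string"}},
            "required": ["text"],
        },
        "handler": lambda args: str(len(args["text"].split())),
    }
}


def reply(rid, **body) -> str:
    """Wrap a result or error in a JSON-RPC 2.0 response envelope."""
    return json.dumps({"jsonrpc": "2.0", "id": rid, **body})


def handle(message: str) -> str:
    """Answer one JSON-RPC request using MCP's tools/list and tools/call shapes."""
    req = json.loads(message)
    rid, method = req.get("id"), req.get("method")
    if method == "tools/list":
        # Advertise each tool's name, description, and JSON Schema for its input.
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return reply(rid, result={"tools": tools})
    if method == "tools/call":
        params = req.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return reply(rid, error={"code": -32602, "message": "Unknown tool"})
        text = tool["handler"](params.get("arguments", {}))
        return reply(rid, result={"content": [{"type": "text", "text": text}], "isError": False})
    return reply(rid, error={"code": -32601, "message": "Method not found"})
```

The shape is the point: the model never runs the tool itself. It discovers what exists, asks for a call by name with structured arguments, and gets structured content back, which is why understanding the architecture changes what students think AI can reach.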

Capability 3: Critical Evaluation

This is the capability most curricula skip. They teach students to produce AI output. They do not teach students to assess it.

Critical evaluation means asking: Is this accurate? Is this the best approach for this context? What would this output miss? Is the confidence this model projects warranted?

I run a specific exercise in the Foundation workshop: take three AI-generated outputs on the same topic, identify where each one differs, locate the specific claims that cannot be verified, and write a brief assessment of which output is most reliable and why.
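A script cannot judge reliability, but it can do the bookkeeping half of this exercise. The minimal Python sketch below is a hypothetical helper, not part of the workshop: it splits three outputs into sentence-level claims, lists the claims each output makes that no other output makes, and flags claims containing numbers as specifics to check. Exact string matching is a crude assumption, so paraphrased claims will not match, and the written assessment still has to come from the student.

```python
import re


def claims(text: str) -> set[str]:
    """Split an output into sentence-level claims, normalized for comparison."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return {" ".join(s.lower().rstrip(".!?").split()) for s in sentences if s.strip()}


def compare(outputs: dict[str, str]) -> dict[str, dict[str, list[str]]]:
    """For each output, list the claims every output shares, the claims only
    this output makes, and the claims that contain numbers."""
    sets = {name: claims(text) for name, text in outputs.items()}
    shared = set.intersection(*sets.values())
    report = {}
    for name, own in sets.items():
        others = set().union(*(s for n, s in sets.items() if n != name))
        report[name] = {
            "shared": sorted(shared),
            "unique": sorted(own - others),  # no other output backs these up
            "numeric": sorted(c for c in own if re.search(r"\d", c)),  # specific figures to verify
        }
    return report
```

The unique and numeric lists become the student's checklist. The trap the exercise is meant to expose still applies: a claim all three outputs share is not thereby verified, because models can repeat the same error.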

Students who do this exercise once start doing it automatically. They stop accepting AI output as a finished product. They treat it as a draft from a capable but imperfect collaborator, which is exactly what it is.

Workshop Formats: What Worked

Hands-on beats lecture by a wide margin. The workshops that got the highest engagement were the ones where students were building something within the first ten minutes. The ones that started with theory and moved to practice lost students at the theory stage.

The structure that worked: 5-minute context (why this matters), 10-minute demonstration, 30-minute guided build, 15-minute extension challenge.
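Those four segments add up to a 60-minute session. A small Python sketch makes the timing explicit and checks it against the rule described under What Failed: no stretch without a build task longer than 20 minutes. Treating the demonstration as not hands-on is an assumption, and the `Segment` type and helper names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    minutes: int
    hands_on: bool  # does the student build something during this segment?


# The session structure described above.
AGENDA = [
    Segment("Context: why this matters", 5, hands_on=False),
    Segment("Demonstration", 10, hands_on=False),  # assumption: students watch, not build
    Segment("Guided build", 30, hands_on=True),
    Segment("Extension challenge", 15, hands_on=True),
]


def schedule(agenda: list[Segment]) -> list[tuple[int, str]]:
    """Return (start minute, segment name) for each segment."""
    start, rows = 0, []
    for seg in agenda:
        rows.append((start, seg.name))
        start += seg.minutes
    return rows


def longest_passive_stretch(agenda: list[Segment]) -> int:
    """Longest run of consecutive minutes with no build task."""
    longest = current = 0
    for seg in agenda:
        current = 0 if seg.hands_on else current + seg.minutes
        longest = max(longest, current)
    return longest
```

For this agenda the guided build starts at minute 15 and the longest passive stretch is 15 minutes, inside the limit; a plan that opens with 25 minutes of theory would report 25 and fail the check.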

30-minute builds outperform 2-hour theory blocks. When I first designed the MCP Server Architecture workshop, it was a 2-hour deep technical session. Completion rate was low. I restructured it: a 30-minute core build (connect Claude to a live API endpoint), then optional extension modules for students who wanted to go further. Completion rates went up significantly.

The constraint of a real deliverable clarifies everything. The best learning happened when students were working toward something they could actually use. Not a test. Not an exercise. A tool they would keep running after the workshop ended.

The Personal AI Assistant workshop ends with students having a working Claude setup with custom memory and instructions they wrote themselves. The Creators AI Toolkit workshop ends with a functioning automation that runs without them. Real outputs, not homework.
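Whatever interface a student uses, the idea behind "custom memory and instructions" fits in a few lines: instructions the student writes, plus facts that persist between sessions and are folded into every conversation. This Python sketch illustrates that idea under assumptions; it is not the workshop's setup. The JSON file, function names, and prompt layout are all hypothetical, and no model is called.

```python
import json
from pathlib import Path

# Hypothetical file name; any persistent store would do.
MEMORY_FILE = Path("assistant_memory.json")


def load_memory(path: Path = MEMORY_FILE) -> list[str]:
    """Read remembered facts; a missing file means an empty memory."""
    if not path.exists():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


def remember(fact: str, path: Path = MEMORY_FILE) -> None:
    """Append a new fact and write it back so it survives between sessions."""
    facts = load_memory(path)
    if fact not in facts:
        facts.append(fact)
        path.write_text(json.dumps(facts, indent=2), encoding="utf-8")


def system_prompt(instructions: str, path: Path = MEMORY_FILE) -> str:
    """Combine the student's own instructions with everything remembered so far."""
    memory = "\n".join(f"- {f}" for f in load_memory(path)) or "- (nothing yet)"
    return f"{instructions}\n\nWhat you know about me:\n{memory}"
```

Keeping instructions and memory separate is the design choice that matters: students rewrite their instructions as they learn, while memory accumulates on its own.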

Cohort learning creates accountability without bureaucracy. Students who went through workshops as part of a small group — even informally, a few people working through the same material in the same week — had higher completion and better retention. The accountability was social, not structural.

The Progressive Curriculum

The curriculum has three tracks. Students assess their starting point using the AI CoE builder and enter at the right level.

Foundation Track (Weeks 1-4)

Entry point for students with no structured AI background. Covers:

- What AI models can and cannot do (capability mapping, not hype)

- Prompt construction fundamentals

- Critical evaluation framework

- Personal AI assistant setup

Output: A working personal AI assistant and a written AI capability map for their field.

Builder Track (Weeks 5-8)

For students who have Foundation or equivalent background. Covers:

- Workflow automation with n8n

- AI music and media creation (Suno)

- Connecting AI to external tools via APIs

- Building a first automation pipeline

Output: One running automation that solves a real problem in their workflow.

Master Track (Weeks 9-12)

For students building AI-native projects or preparing for professional environments. Covers:

- MCP server architecture and tool integration

- Multi-model workflows (combining Claude, image models, audio models)

- AI governance and evaluation frameworks

- Personal AI CoE design

Output: A personal AI CoE plan and one working multi-model system.

The tracks are not rigid. Students move between them based on what they are building. The assessment tool surfaces where they are, not where they are supposed to be.
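The CoE builder's actual scoring is not described here, so treat the following as a hypothetical sketch of how a 20-question assessment could surface an entry point. It assumes each answer is scored 0 to 4 and tagged with one of the three core capabilities, and it places a student by their weakest capability, since each track builds on the one before. The scale, cut-offs, and function name are invented for illustration.

```python
QUESTIONS = 20  # the assessment length described above
MAX_SCORE = 4   # hypothetical: each answer scored 0 to 4
TRACKS = [(0.40, "Foundation"), (0.75, "Builder")]  # hypothetical cut-offs; above both -> Master


def entry_track(scores: dict[str, list[int]]) -> tuple[str, str]:
    """Return (entry track, weakest capability) from answer scores grouped by capability."""
    if sum(len(v) for v in scores.values()) != QUESTIONS:
        raise ValueError(f"expected {QUESTIONS} answers")
    if any(not v for v in scores.values()):
        raise ValueError("every capability needs at least one question")
    if any(s < 0 or s > MAX_SCORE for v in scores.values() for s in v):
        raise ValueError(f"scores must be between 0 and {MAX_SCORE}")
    # Fraction of the maximum possible score, per capability.
    levels = {cap: sum(v) / (MAX_SCORE * len(v)) for cap, v in scores.items()}
    # Tracks build on each other, so the weakest capability sets the entry point.
    weakest = min(levels, key=levels.get)
    for cutoff, track in TRACKS:
        if levels[weakest] < cutoff:
            return track, weakest
    return "Master", weakest
```

A student who scores at the top on prompt engineering but only halfway on workflow automation would enter Builder, with workflow automation named as the gap: where they are, not where they are supposed to be.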

Tools to Teach First

The tool selection question comes up in every professor conversation. There are hundreds of AI tools. What do you start with?

The answer I arrived at: start narrow, go deep.

Start with Claude. Not because it is the best model in every benchmark — because the Claude interface rewards structured thinking. It responds to constraint and role-framing in ways that make good prompt engineering visible to learners. Students see the difference between a vague prompt and a structured one immediately.

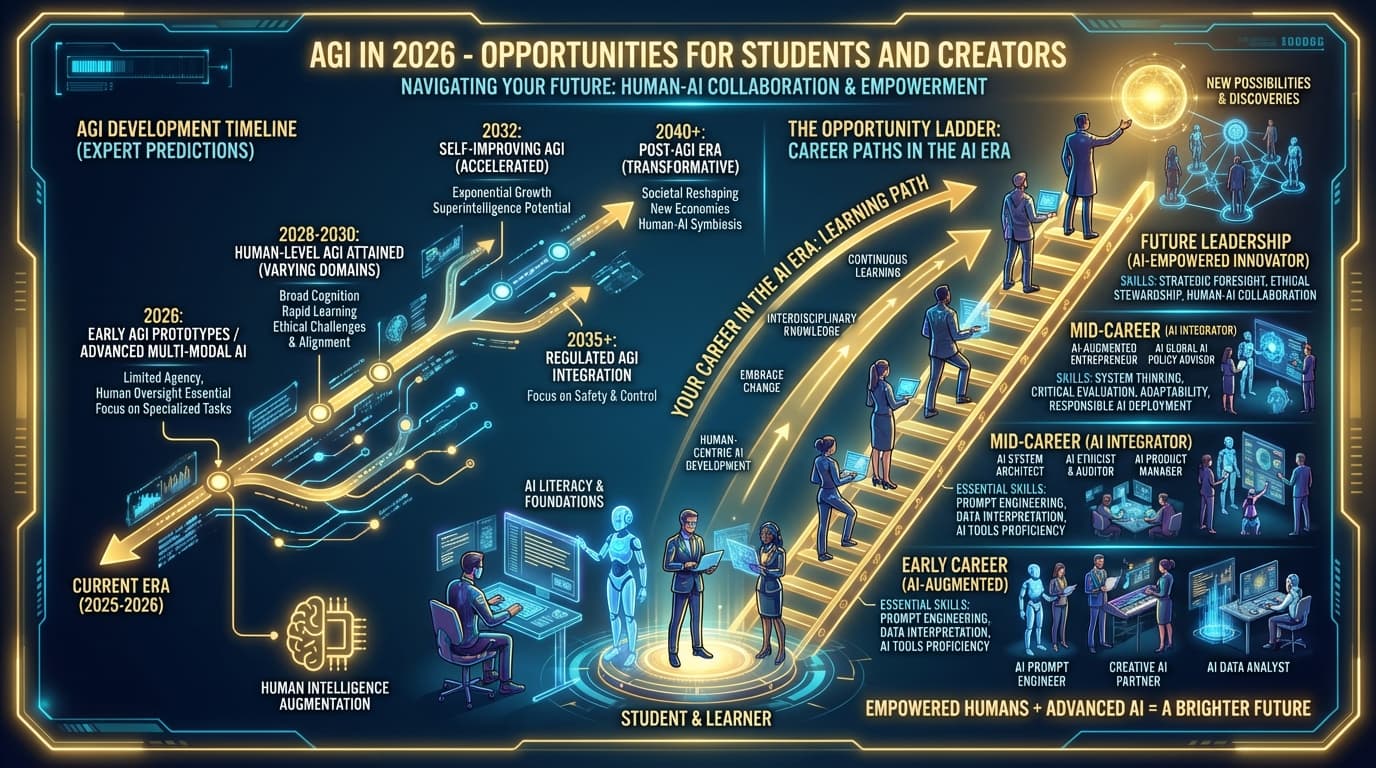

Add Suno second. This surprised me. Music creation is the fastest path to understanding AI as a creative collaborator rather than a replacement. Students who would never engage with code-based AI tools spend hours iterating on Suno tracks. And the skills transfer: iteration, specificity, understanding model tendencies. The AI opportunities post for students and creators covers why creative AI literacy matters as much as technical AI literacy.

Introduce Claude Code third. For students with any coding background, Claude Code is where AI literacy becomes concrete. You are not just prompting — you are watching an AI navigate a codebase, make decisions, and explain its reasoning. It surfaces AI decision-making in a way that abstract examples do not.

What to defer: specialized tools, narrow vertical applications, anything that requires significant setup before a student sees results. First-session friction kills momentum.

What Failed

Too much theory before hands-on. Any workshop segment longer than 20 minutes without a build task loses students. This is not a student attention problem — it is a curriculum design problem. Theory lands when it explains something students just experienced, not before.

Too many tools at once. One workshop I ran tried to introduce Claude, n8n, Zapier, and a third-party API in a single session. Students who finished it could not describe what any of the tools was for. Four tools in one session is not breadth — it is cognitive overload dressed as ambition.

Assessment without context. Rubrics for AI work that focused on "did the student use AI" rather than "did the student produce something with genuine utility" created the wrong incentives. Students optimized for the rubric, not for the capability.

Ignoring the critical evaluation step. Early workshops celebrated AI-generated output. Later workshops taught evaluation. The students who learned evaluation first produced better work and trusted AI more, because they understood what it was actually reliable for.

The AI Literacy Gap in 2026

The students I talk to are not afraid of AI. They use it constantly — for essay drafts, for research summaries, for cover letters. But most of them use it the same way they use a search engine. Input query, take output, move on.

That is a narrow slice of what these tools can do. And in a professional environment — especially as organizations build AI-native workflows — the gap between "AI user" and "AI builder" translates directly into opportunity.

The research hub on AI education has the reading list I recommend for students and educators who want the deeper conceptual grounding behind this curriculum. The student hub is where to start if you want to work through it.

The curriculum is not finished. It changes quarterly as the tools change. What does not change: the three core capabilities. Prompt engineering, workflow automation, and critical evaluation. Everything else is context.

FAQ

Which AI tool should students learn first?

Claude. It rewards structured prompt construction, which makes the skills transferable across other models. Start with one tool and go deep before adding others. Two months of consistent Claude use will teach more than two weeks of switching between six tools.

How long does the full curriculum take?

The three-track structure maps to 12 weeks at roughly 4-6 hours per week. Students with prior coding experience or strong AI backgrounds often compress Foundation and move faster through Builder. The AI CoE builder tool helps students find their starting point.

Can professors integrate this into existing courses?

Yes. The professor toolkit is designed for exactly that — individual workshops slot into existing syllabi as single-session modules. The prompt engineering workshop runs in 90 minutes. The personal AI assistant setup runs in 60. Neither requires any course restructuring.

What is the difference between the Foundation and Builder tracks?

Foundation builds understanding: what AI can do, how to direct it, how to evaluate it. Builder builds systems: automations, pipelines, multi-step workflows. A student who completes Foundation can use AI well. A student who completes Builder can make AI work for them without constant manual input.

Is the curriculum technical? Do students need coding skills?

Foundation and Builder are designed to be accessible without coding background. The MCP Server Architecture workshop in the Master track does involve technical concepts, but the hands-on component uses visual configuration rather than raw code. Students with zero coding experience have completed all three tracks.
